Academic Integrity in the Age of Generative AI

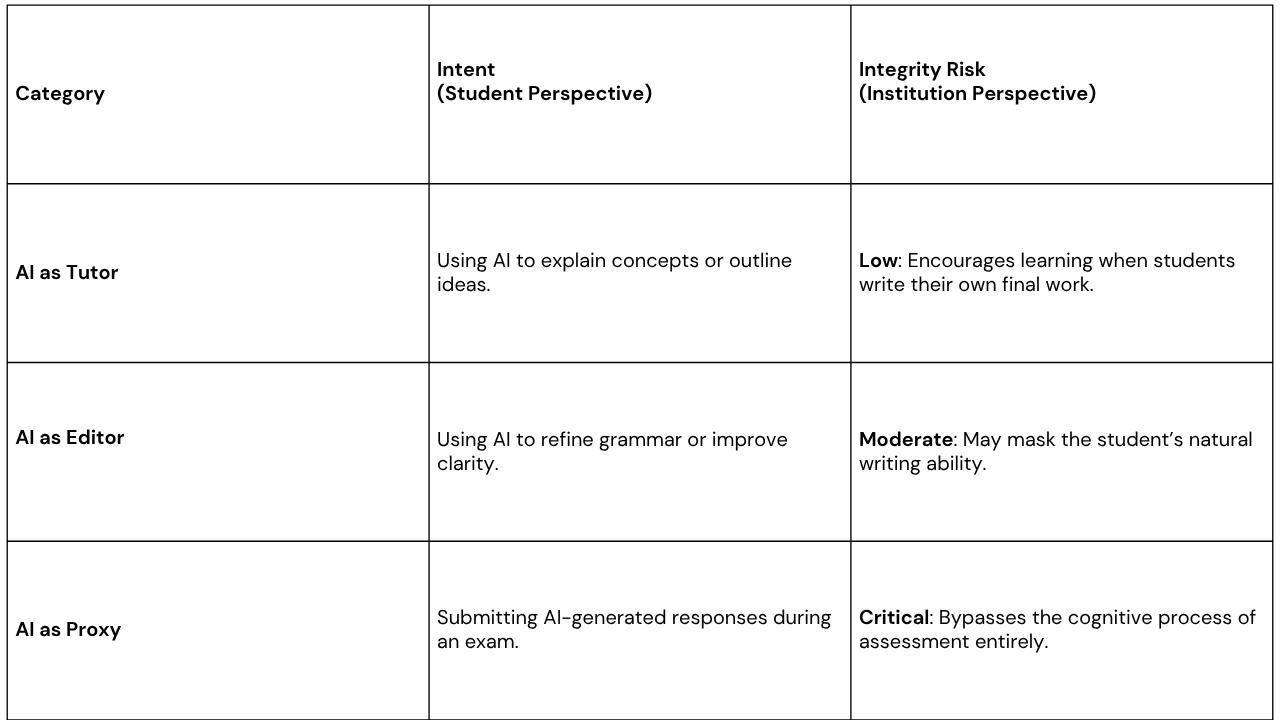

When discussing academic integrity in the age of generative AI, the conversation often focuses on “catching” students using tools like ChatGPT. But the real challenge for institutions runs deeper: distinguishing between AI as a learning assistant and AI as a substitute for independent thinking.

Generative AI has blurred the line between academic assistance and misconduct. Students increasingly rely on AI tools to brainstorm ideas, explain difficult concepts, and structure their work. Because of this shift, the assumption that “all AI use is cheating” is no longer practical or enforceable.

Instead, institutions should focus on a more meaningful objective: ensuring that assessments still measure authentic understanding and individual performance.

Protecting academic integrity in this new environment requires moving beyond simple detection toward systems that can validate how knowledge is demonstrated during an assessment.

The Current Landscape: Usage vs Misuse

Understanding the distinction between legitimate AI use and misuse is essential. The objective is not to eliminate AI from education, but to ensure that formal examinations still reflect the student’s own knowledge and skills.

Rethinking Integrity in a Digital Assessment Environment

Traditional proctoring systems were designed to detect physical forms of cheating, such as hidden notes, mobile phones, or another person in the room. Generative AI introduces a different challenge.

AI tools can be accessed through browser extensions, minimized windows, or secondary devices, making them difficult to detect through traditional monitoring methods alone.

This shift requires a smarter approach, one that focuses on assessment integrity signals rather than constant surveillance.

From Surveillance to Event-Driven Validation

Modern proctoring technology is moving toward event-driven validation, where AI systems focus on detecting meaningful disruptions to the assessment process rather than continuously recording students.

Instead of collecting hours of unnecessary video footage, the system identifies and flags specific events that may indicate a potential integrity issue.
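To make the event-driven idea concrete, here is a minimal Python sketch. It assumes the exam client emits a stream of timestamped session events. The `SessionEvent` type, the event labels, and the `FLAGGABLE_KINDS` rule set are hypothetical illustrations and do not describe Eye’s actual data model. Only events that match a rule are kept for review. Routine activity is discarded rather than stored.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SessionEvent:
    timestamp: float  # seconds since the exam started
    kind: str  # hypothetical event label, e.g. "focus_lost" or "paste"
    detail: str = ""

# Hypothetical rule set: the only event kinds surfaced for human review.
FLAGGABLE_KINDS = frozenset({"focus_lost", "new_browser_tab", "paste", "second_display"})

def flag_events(events):
    """Return only events that may indicate an integrity issue, in order."""
    return [event for event in events if event.kind in FLAGGABLE_KINDS]

session = [
    SessionEvent(1.0, "keystroke"),
    SessionEvent(5.2, "focus_lost"),
    SessionEvent(9.8, "keystroke"),
    SessionEvent(12.0, "paste", "412 characters"),
]
flagged = flag_events(session)
print([(e.timestamp, e.kind) for e in flagged])  # → [(5.2, 'focus_lost'), (12.0, 'paste')]
```

Because routine keystrokes never leave the filter, the review record holds two entries instead of a full session log.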

Monitoring the Digital Environment

Rather than focusing only on visual monitoring, advanced systems analyze the digital environment of the exam session. Indicators such as unauthorized browser activity, repeated window switching, or unusual system behavior can signal attempts to access external tools during an exam.

This allows institutions to identify potential integrity risks without intruding on the student’s personal space.
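Repeated window switching is easier to judge in context than in isolation. A single switch may be accidental, but several switches in quick succession are more notable. The sketch below assumes that focus-switch timestamps are available in seconds. It counts the switches that fall within a trailing time window. The function name, the 60-second window, and the threshold of 3 are hypothetical defaults that an institution would need to tune.

```python
from collections import deque

def repeated_switching(switch_times, window_s=60.0, threshold=3):
    """Return each timestamp at which at least `threshold` focus switches
    have occurred within the trailing `window_s` seconds (hypothetical rule)."""
    recent = deque()
    alerts = []
    for t in sorted(switch_times):
        recent.append(t)
        # Forget switches that have fallen out of the trailing window.
        while t - recent[0] > window_s:
            recent.popleft()
        if len(recent) >= threshold:
            alerts.append(t)
    return alerts

# Three switches within 15 s, a quiet period, then a burst of four.
print(repeated_switching([10, 20, 25, 200, 400, 410, 415, 420]))  # → [25, 415, 420]
```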

Identifying Behavioral Patterns

Generative AI usage can sometimes leave subtle behavioral signals, such as repeated copy-paste actions, unusually rapid answer completion, or abrupt changes in response structure.

By analyzing these signals, institutions gain clearer evidence of possible attempts to bypass the assessment process, rather than relying on suspicion or intrusive monitoring.
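The following sketch shows how two of the signals mentioned above, rapid completion and copy-paste, might be computed for a single answer. It assumes that the exam platform records the time spent, the characters typed, and the characters pasted for each response. `AnswerRecord`, `behavioral_signals`, and both thresholds are hypothetical. The output is a list of signals for a reviewer to consider, not a verdict.

```python
from dataclasses import dataclass

@dataclass
class AnswerRecord:
    question_id: str
    seconds_spent: float
    chars_typed: int
    chars_pasted: int

def behavioral_signals(answer, max_chars_per_second=8.0, paste_ratio=0.5):
    """List hypothetical review signals for one answer; never a verdict."""
    signals = []
    total = answer.chars_typed + answer.chars_pasted
    if total == 0:
        return signals  # empty answer: nothing to assess
    # Text appeared faster than a plausible composing speed.
    if answer.seconds_spent <= 0 or total / answer.seconds_spent > max_chars_per_second:
        signals.append("rapid_completion")
    # Most of the answer arrived through paste rather than typing.
    if answer.chars_pasted / total >= paste_ratio:
        signals.append("mostly_pasted")
    return signals

print(behavioral_signals(AnswerRecord("q1", 600.0, 1200, 0)))  # → []
print(behavioral_signals(AnswerRecord("q2", 30.0, 20, 900)))   # → ['rapid_completion', 'mostly_pasted']
```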

Maintaining Institutional Oversight

While AI can help identify potential integrity events, final decisions must remain with academic staff. Fully automated disciplinary decisions can create legal and ethical risks, particularly in complex situations involving AI tools.

A human-in-the-loop model ensures that technology supports institutional governance rather than replacing it.
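A human-in-the-loop workflow can be enforced in the data model itself. This minimal sketch uses hypothetical names (`IntegrityCase`, `Outcome`, `open_case`, `record_decision`). The system can only open a case in a pending state. Resolving a case requires a named staff reviewer, so no outcome is ever assigned automatically.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional

class Outcome(Enum):
    PENDING_REVIEW = "pending_review"
    NO_ISSUE = "no_issue"
    REFER_TO_PANEL = "refer_to_panel"

@dataclass
class IntegrityCase:
    student_id: str
    evidence: List[str] = field(default_factory=list)
    outcome: Outcome = Outcome.PENDING_REVIEW
    reviewer: Optional[str] = None

def open_case(student_id, flagged_events):
    """Automated step: collect evidence only; the outcome stays pending."""
    return IntegrityCase(student_id, list(flagged_events))

def record_decision(case, reviewer, outcome):
    """Human step: only a named staff member can resolve a case."""
    if not reviewer:
        raise ValueError("a decision requires a named staff reviewer")
    if outcome is Outcome.PENDING_REVIEW:
        raise ValueError("a decision must resolve the case")
    case.reviewer = reviewer
    case.outcome = outcome
    return case

case = open_case("student-001", ["focus_lost at 5.2 s", "paste at 12.0 s"])
print(case.outcome)  # → Outcome.PENDING_REVIEW
record_decision(case, "reviewer-42", Outcome.NO_ISSUE)
print(case.outcome, case.reviewer)  # → Outcome.NO_ISSUE reviewer-42
```

The system can only ever set `PENDING_REVIEW`. As a result, automated flags remain evidence, and the decision stays with academic staff.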

Future-Proofing Academic Integrity

The credibility of any degree ultimately depends on the integrity of its assessments. As generative AI continues to evolve, universities must adapt their assessment strategies to ensure that qualifications remain trusted and meaningful.

By combining intelligent proctoring technology, responsible AI monitoring, and institutional oversight, institutions can create secure digital examination environments where students demonstrate their own knowledge, not the knowledge of a machine.

Conclusion

Generative AI is transforming education, but it does not have to weaken academic standards. Institutions that adopt smarter, evidence-based approaches to assessment integrity can embrace AI innovation while preserving fair, transparent, and credible examinations.

Is Your Integrity Strategy AI-Ready?

Protecting academic standards in the AI era requires more than traditional monitoring; it requires smarter validation of the assessment process.

Eye’s AI-powered, event-driven proctoring technology helps institutions safeguard exam integrity while supporting fair and trusted digital assessments.

Book a Demo to see how: https://www.eyeproctor.com/

Copyright 2026 © Eye Team. All Rights Reserved.